Big data has become a big part of our lives, and a researcher at the University of Texas at Arlington is looking to develop big data mining tools that could help scientists and physicians better predict clinical outcomes and lead to cures for such diseases as cancer.

According to a UTA release, the National Science Foundation has awarded a $1.32 million, four-year grant to Heng Huang, a professor in the Computer Science and Engineering Department, to find biomarkers and phenotypic markers by which “image-omics,” a set of data-based precision medicine techniques, can lead to better treatments.

“The long-term plan for this research is to be able to treat an individual based on his or her image-omics data,” Huang said. “For instance, if a patient’s tissue images change, how is that related to genomics? Or, how can we use multi-omics to detect mutations? Once we find the biomarkers in the data, we can provide precision medicine strategy to treat the illness, from determining life expectancy to tailoring medicines to an individual’s needs.”

Huang’s research will integrate multiple modalities of very large, complex patient data, the release said.

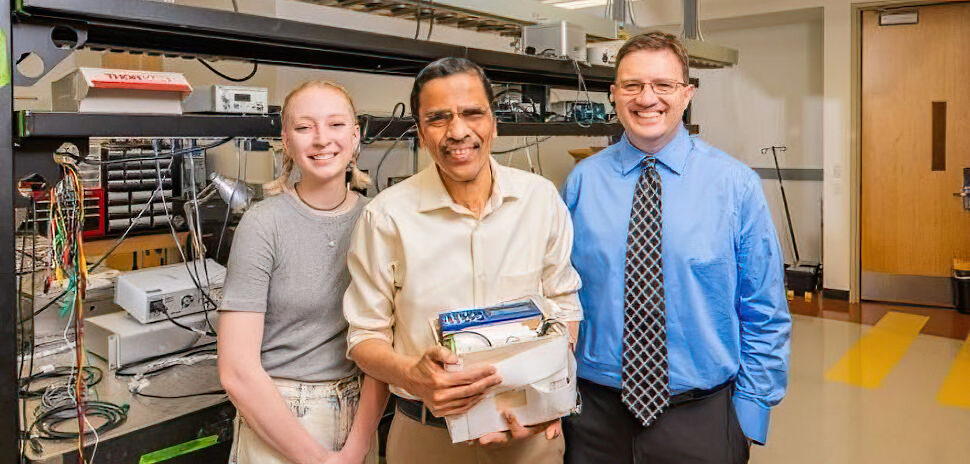

Co-principal investigators on the research are Chris Deng, a professor in the department, and Jia Rao, an assistant professor.

What is image-omics? Omics informally refers to a field of study in biology ending in -omics, such as genomics or proteomics.

According to the release, image-omics data include image data, such as pathological images, and omic data, such as DNA sequences, RNA expression, and genetic and genomic biomarkers, all taken from the same patient.

The release said that the data are at a very high resolution. For example, a pathological image could measure 1 million pixels by 1 million pixels.

Compare that to your cell phone images, which might measure 1,000 pixels by 1,000 pixels, the release said. Every piece of data compiled could be more than 30 gigabytes. Each gigabyte equals 1 billion bytes.
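To put those numbers side by side, here is a rough back-of-the-envelope sketch in Python. The pixel dimensions and the gigabyte definition come from the release; the 3-bytes-per-pixel figure (uncompressed 8-bit RGB) is an assumption made here for illustration and is not from the release, so the raw sizes it produces are not the release's storage figures.

```python
# Figures quoted in the release
PATHOLOGY_SIDE_PX = 1_000_000   # pathological image: 1 million pixels per side
PHONE_SIDE_PX = 1_000           # cell phone image: 1,000 pixels per side
BYTES_PER_GB = 1_000_000_000    # 1 gigabyte = 1 billion bytes

# Assumption (NOT from the release): uncompressed 8-bit RGB, 3 bytes per pixel
BYTES_PER_PIXEL = 3


def pixel_count(side_px: int) -> int:
    """Total pixels in a square image with the given side length."""
    return side_px * side_px


def raw_size_gb(side_px: int, bytes_per_pixel: int = BYTES_PER_PIXEL) -> float:
    """Uncompressed size in gigabytes under the bytes-per-pixel assumption."""
    return pixel_count(side_px) * bytes_per_pixel / BYTES_PER_GB


pathology_pixels = pixel_count(PATHOLOGY_SIDE_PX)
phone_pixels = pixel_count(PHONE_SIDE_PX)
ratio = pathology_pixels // phone_pixels

print(f"Pathological image: {pathology_pixels:,} pixels")
print(f"Cell phone image:   {phone_pixels:,} pixels")
print(f"Ratio:              {ratio:,} to 1")
print(f"Raw size at 3 bytes/pixel: {raw_size_gb(PATHOLOGY_SIDE_PX):,.0f} GB")
```

By this count, a single pathological image of that size holds about a million times as many pixels as the phone image.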

The massive data sets require new concepts and enabling tools to handle their unprecedented scale and complexity, according to the release.